Introduction

I expain my code for a 2D halo exchange of unstructed mesh blocks (boxes) using message passing interface (MPI). The halo can have any thickness. The periodic condition is also supported. The blocks can have different sizes and location in space. Thanks to MPI self-send/receive, a CPU can run multiple blocks.

Code

The full code is on GitHub.

Prerequisites

This post is continuation of halo exchange for structured blocks.

Problem

Imagine we have 2D Domain made of mesh blocks like below:

Blocks 1a and 1b are run on processor 1, block 2 on processor 2, and block 3 on processor 3.

These blocks need to send and receive border area to form their ghost or halo layer.

It looks a bit complex, how can we set communications between blocks?

Intersection

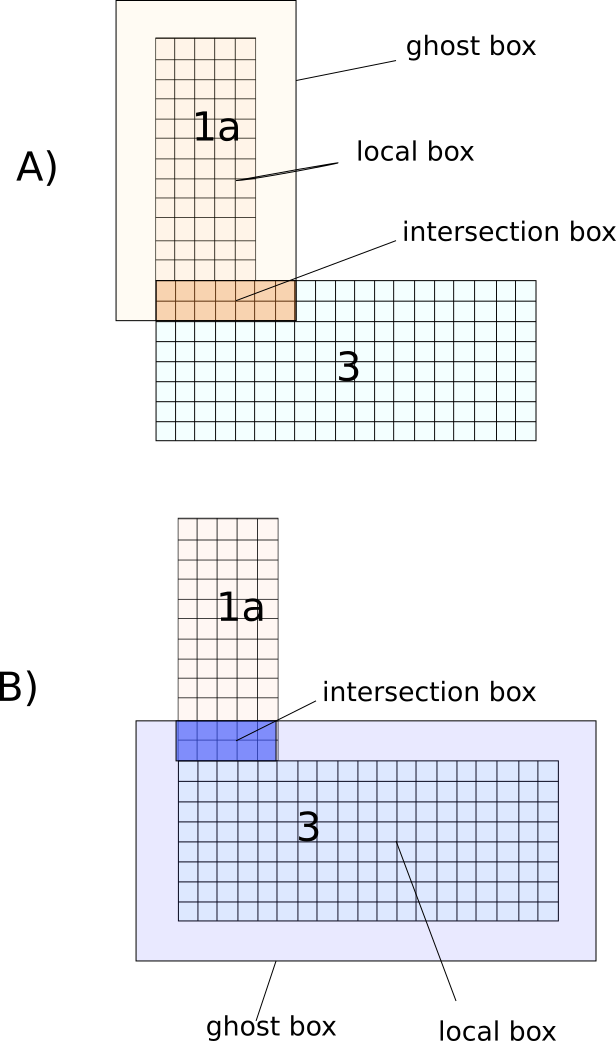

I explain the solution with an example. Let’s only focus on block 1a and 3 and assume the halo thickness is 2 cells:

In the image above, first box 1a is enlarged 2 cells to be its ghost box. The intersection of it with box 3 is found. When the intersection is a subarray of 3, its data is sent to 1a, but when it is a subarray of 1a, it receives its data from 3. In the second figure, the opposite happens, 3 is enlarged to find its intersection with 1a, that gives us the subarray of 1a that is sent to subarray of 3.

That is it! we repeat this procudre for every two blocks in the domain and we record neighbors and their corresponding send-receive subarrays.

Box

To separate concepts, we define Box class to represent boundaries of a block and intersections. The class contains all the necessary functions for our job:

struct Box

{

int ix0 = 0, ix1 = 0, iy0 = 0, iy1 = 0;

std::array<int, 2> GetExtent() const

{

return std::array<int, 2>{ix1 - ix0 + 1, iy1 - iy0 + 1};

}

Box clone() const{/*returns clone of this box*/}

Box &Translate(int dx, int dy){/*moves box by (dx,dy)*/}

Box &Expand(int thickness, Axis axis){/*expand box along an axis*/}

Box &Expand(int thickness){/*expand in all directions*/}

Box &Merge(const Box &other){/*This box grows to contain another box*/}

Box &Intersect(const Box &other){/*intersection of box with another box*/}

void Print() const{/*Pring specs of box*/};

};

The good thing is that Box class is about geometry and nothing else.

Block

A block contains the nodes (or cells) data. It manages a 2D array of nodes. A block array contains the halo (ghost) nodes as well. To initialize a block we pass the physical boundary of a block via a box, but block automatically adds the halo nodes to it. Node ix,iy=(0,0) is the first halo node at the bottom-left of the block. For a domain with halo thickness of 2, the physical node start at ix,iy=(2,2).

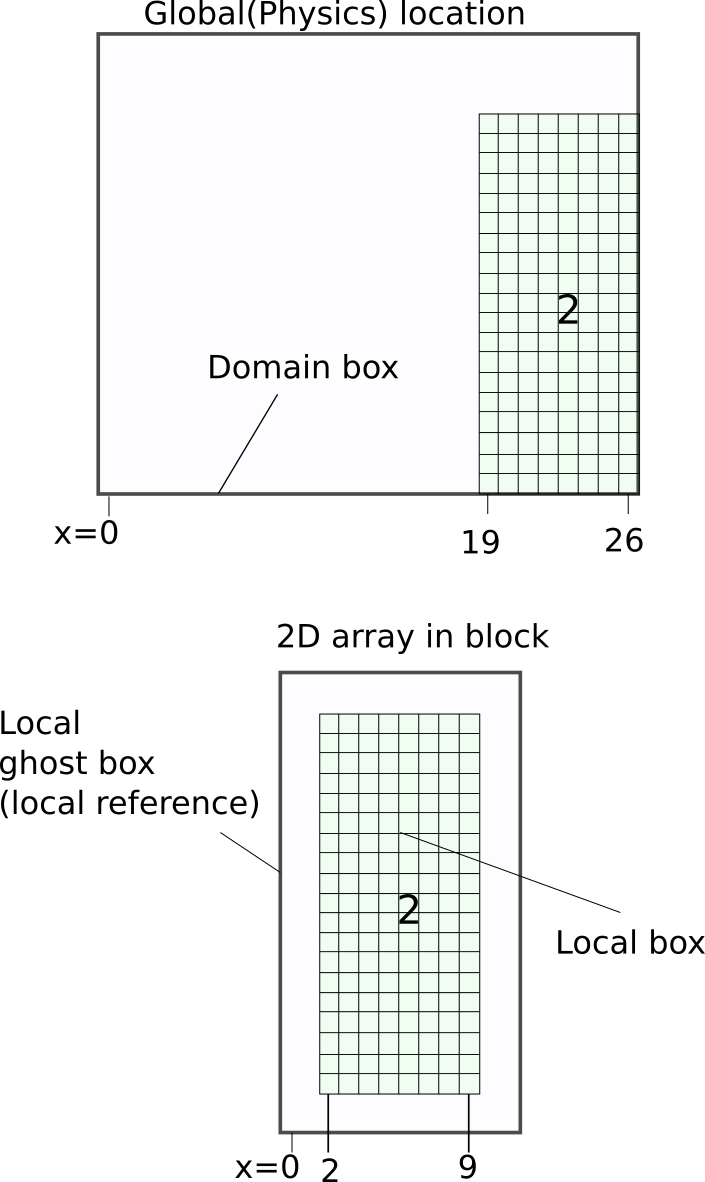

This picture explains the difference between global and local positions. Box 2 extends along x-axis from 19 to 26. But in local reference, in a block, it is between 2 and 9. In the local reference, cells 0 and 1 belong to the halo (ghost layer).

Halo manager

HaloManager class receives a list of boxes (block physical boundaries) and their processor ranks which are called BoxRanks in the code. Then it finds the neighbors and subarrays for each block.

The core function is

auto FindNeighbours(const Box& fbox)

{

std::vector<Neighbor> neighbors;

for (auto &boxRank : boxRanks)

{

if (!fbox.IsEqual(boxRank.box))

AddNeighbour(fbox, boxRank, neighbors);

for (size_t dir = 0; dir < 9; dir++)

{

bool isThere = false;

auto pbox = GetPeriodicBox(boxRank.box, (Dir::Type)dir, isThere);

if (isThere)

AddNeighbour(fbox, BoxRank{pbox, boxRank.rank}, neighbors);

}

}

return neighbors;

}

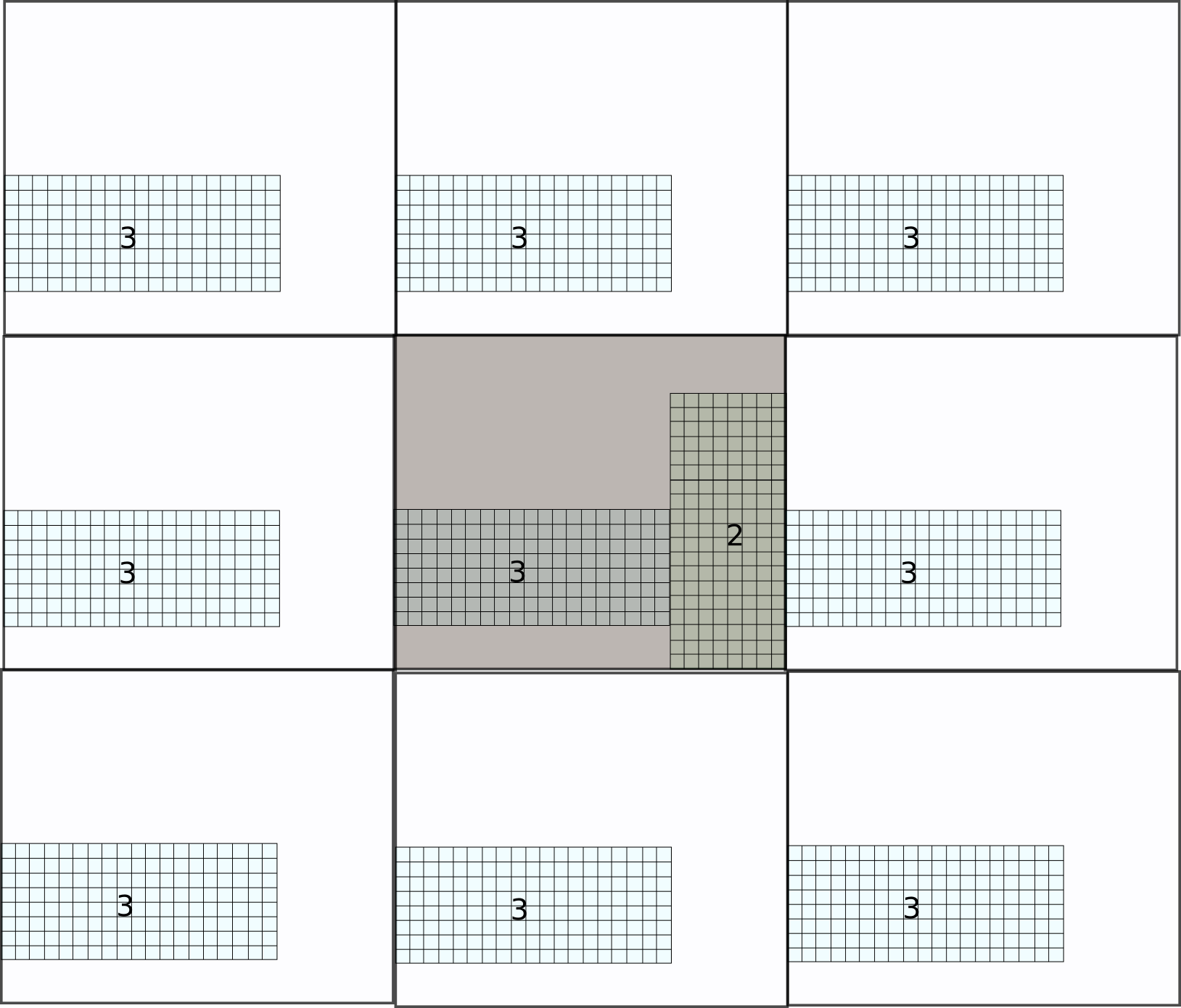

where a focused box (or block) is received and all neighboring blocks are assessed to see if they have an intersection with it. If there is periodic boundary, it also checks the periodic image of the neighbors in all 8 directions:

For example the intersection of block 2 with block 3 and its periodic images are found like this figure:

The detail of adding a neighbor to our list is in this function:

void AddNeighbour(const Box& fbox, const BoxRank &boxRank, std::vector<Neighbor>& neighbors )

{

auto ghostBox = ToGhostBox(fbox);

auto localGhostBox = RelativeToGhostBox(fbox, ghostBox);

auto inbox = ghostBox.clone().Intersect(boxRank.box);

auto outbox = fbox.clone().Intersect(ToGhostBox(boxRank.box));

if (inbox.IsValid() && outbox.IsValid())

{

auto intag = HashBox(PeriodicBoxToInDomainBox(inbox));

auto outtag = HashBox(PeriodicBoxToInDomainBox(outbox));

auto localInbox = RelativeToGhostBox(fbox,inbox);

auto localOutbox = RelativeToGhostBox(fbox,outbox);

neighbors.push_back(Neighbor{

.rank = boxRank.rank,

.box = PeriodicBoxToInDomainBox(boxRank.box),

.inType = MakeMpiType(localGhostBox, localInbox),

.outType = MakeMpiType(localGhostBox, localOutbox),

.inTag = intag,

.outTag = outtag});

}

}

- inbox: the intersection (subarray) that our focused box is receiving.

- outbox: the intersection (subarray) that our focused box is sending.

- intag, outtag: we need some tags for when a box interact with itself or other boxes in two directions due to periodic boundaries. Look at block 2 in previous figure, it sends to and recevies from left and right of block 3. With tags, messages don’t get mistakenly swapped.

- rank: MPI process Id of a box (or block).

- localInbox, localOutbox: we need them because at the end of the day we are working with arrays that their index start from zero. x

- inType, outType: the in/out subarrays should be defined as MPI type.

Communications

We have Communication class that tells a block to send its boundary data to the halo of neighbors and receive boundary data of neighbors at its halo:

class Communication

{

auto Communicate(const Neighbor &nei)

{

MPI_Request recvRequest;

MPI_Request sendRequest;

MPI_Irecv(&block(0, 0), 1, nei.inType, nei.rank, nei.inTag, MPI_COMM_WORLD, &recvRequest);

MPI_Isend(&block(0, 0), 1, nei.outType, nei.rank, nei.outTag, MPI_COMM_WORLD, &sendRequest);

return std::array<MPI_Request, 2>{sendRequest, recvRequest};

}

void CommunicateAsync(){/*Communicate with all neighbors*/}

void AwaitRecv() {/*Wait to receive messages*/}

void AwaitSend(){/*Wait to send messages*/}

void Await(){/*Wait for both send & receive*/}

void Communicate(){/*Communicate to all neighbors and wait for it*/}

};

CpuBlocks

CpuBlocks class is created to wrap everything in a higher level interface. It stores a list of communications where each one focuses on one block and its neighbors. CpuBlocks also runs communications for all blocks.

class CpuBlocks

{

private:

std::vector<Communication<T>> comms;

public:

std::vector<Block2d<T>> blocks;

CpuBlocks(const std::vector<BoxRank> &boxRanks, std::array<bool, 2> isPeriodic, size_t overlap)

{

HaloManager haloManager{boxRanks, isPeriodic, overlap};

int rank, size;

MPI_Comm_rank(MPI_COMM_WORLD, &rank);

for (auto &&boxRank : boxRanks)

if (boxRank.rank == rank)

blocks.emplace_back(boxRank.box, overlap);

for (auto &&block : blocks)

{

auto neighbors = haloManager.FindNeighbours(block.GetGlobalOwnBox());

comms.emplace_back(block, neighbors);

}

};

void CommunicateAsync(){/* for all comms*/}

void Await(){/*Wait for all communications*/}

void Communicate(){/*Communicate sync way*/}

};

Examples

The code contains one example that shows how to work with the interface.